Well, yeah!

I created a quick Console application and set up a linear function call like so:

    Stopwatch stopwatch = new Stopwatch();
    stopwatch.Start();

    GetNorthwindCustomers();
    GetAdventureWorkWorkOrders();

    stopwatch.Stop();
    Console.WriteLine(string.Format("Elapsed Time: {0} milliseconds", stopwatch.ElapsedMilliseconds.ToString()));

I then set up two data access functions, using Entity Framework for Northwind and LINQ to SQL for AdventureWorks:

    static void GetNorthwindCustomers()
    {
        ConsoleColor currentColor = Console.ForegroundColor;
        Console.ForegroundColor = ConsoleColor.Red;

        NorthwindEntities dataContext = new NorthwindEntities();
        var customers = (from c in dataContext.Customers
                         select c).OrderBy(c => c.CompanyName);

        for (int i = 0; i < 50; i++)
        {
            foreach (Customer customer in customers)
            {
                Console.WriteLine(string.Format("CustomerID: {0} – CompanyName: {1}", customer.CustomerID, customer.CompanyName));
            }
        }

        Console.ForegroundColor = currentColor;
    }

And

    static void GetAdventureWorkWorkOrders()
    {
        ConsoleColor currentColor = Console.ForegroundColor;
        Console.ForegroundColor = ConsoleColor.Blue;

        AdventureWorksDataContext dataContext = new AdventureWorksDataContext();
        var workOrders = (from wo in dataContext.WorkOrders
                          select wo).OrderBy(wo => wo.StartDate);

        foreach (WorkOrder workOrder in workOrders)
        {
            Console.WriteLine(string.Format("WorkOrderId: {0} – StartDate: {1}", workOrder.WorkOrderID, workOrder.StartDate.ToString()));
        }

        Console.ForegroundColor = currentColor;
    }

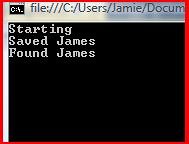

After running it, I get the following result:

I then added AsParallel() to the source of each query, like this:

    var customers = (from c in dataContext.Customers.AsParallel()
                     select c).OrderBy(c => c.CompanyName);

    var workOrders = (from wo in dataContext.WorkOrders.AsParallel()
                      select wo).OrderBy(wo => wo.StartDate);

and ran it again.

The results were:

Two questions came to mind:

· Why was there a performance gain?

· The database calls still ran in sequence. How can I get them to run simultaneously?

I decided to tackle the second question first, leaving the first as an academic exercise to be completed later.

I realized immediately that the database calls were running in sequence because, well, they were being called in sequence:

    GetNorthwindCustomers();
    GetAdventureWorkWorkOrders();

To get the database calls to run in parallel, I needed to introduce parallelism at that level of the call stack.

I first tried wrapping each function call in a Task:

    stopwatch.Start();

    Task taskNorthwind = Task.Factory.StartNew(() => GetNorthwindCustomers());
    Task taskAdventureWorks = Task.Factory.StartNew(() => GetAdventureWorkWorkOrders());

    stopwatch.Stop();

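The funny thing is that the stopwatch stopped immediately and the two tasks ran after that, because Task.Factory.StartNew only schedules the work and returns without waiting for it to finish. So I needed a way to run only the database calls in parallel, not the stopwatch.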
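One way to keep the Task approach and still get a meaningful timing would be to wait for both tasks before stopping the stopwatch. The following is only a minimal sketch of that idea: `FakeNorthwindCall`, `FakeAdventureWorksCall`, and `TimeBoth` are hypothetical names, and the sleeps stand in for the real database functions.

```csharp
using System;
using System.Diagnostics;
using System.Threading;
using System.Threading.Tasks;

public static class TimedTasksSketch
{
    // Hypothetical stand-ins for the two database functions in this post.
    public static void FakeNorthwindCall() { Thread.Sleep(200); }
    public static void FakeAdventureWorksCall() { Thread.Sleep(200); }

    // Starts both actions as tasks and returns the elapsed time
    // only after both of them have finished.
    public static long TimeBoth(Action first, Action second)
    {
        Stopwatch stopwatch = Stopwatch.StartNew();

        Task taskFirst = Task.Factory.StartNew(first);
        Task taskSecond = Task.Factory.StartNew(second);

        // StartNew only schedules the work; block here until both tasks complete.
        Task.WaitAll(taskFirst, taskSecond);

        stopwatch.Stop();
        return stopwatch.ElapsedMilliseconds;
    }

    public static void Main()
    {
        long elapsed = TimeBoth(FakeNorthwindCall, FakeAdventureWorksCall);
        Console.WriteLine(string.Format("Elapsed Time: {0} milliseconds", elapsed));
    }
}
```

With the real functions, the same `Task.WaitAll` call would sit between the two `StartNew` lines and `stopwatch.Stop()`.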

I then tried the Parallel.Invoke method like so:

    Parallel.Invoke(GetNorthwindCustomers);
    Parallel.Invoke(GetAdventureWorkWorkOrders);

And I got the same result as the sequential call, since each Parallel.Invoke call blocks until the actions passed to it have finished:

So then I realized I should put both functions in the same Parallel.Invoke call:

    Parallel.Invoke(GetNorthwindCustomers, GetAdventureWorkWorkOrders);
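The difference between two separate Parallel.Invoke calls and one combined call can be made visible with a small event log. This is a sketch under stated assumptions: `Work` is a hypothetical stand-in for the database functions, and the 100 ms sleep simulates the fetch.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading;
using System.Threading.Tasks;

public static class InvokeSketch
{
    // Hypothetical stand-in for a database function: logs when it starts and ends.
    public static Action Work(string name, ConcurrentQueue<string> log)
    {
        return () =>
        {
            log.Enqueue(name + " start");
            Thread.Sleep(100); // simulated fetch time
            log.Enqueue(name + " end");
        };
    }

    public static string[] TwoSeparateInvokes()
    {
        var log = new ConcurrentQueue<string>();
        Parallel.Invoke(Work("Northwind", log));      // blocks until this action is done
        Parallel.Invoke(Work("AdventureWorks", log)); // so this one only starts afterwards
        return log.ToArray();
    }

    public static string[] OneCombinedInvoke()
    {
        var log = new ConcurrentQueue<string>();
        // Both actions are handed to the scheduler together and may overlap.
        Parallel.Invoke(Work("Northwind", log), Work("AdventureWorks", log));
        return log.ToArray();
    }

    public static void Main()
    {
        Console.WriteLine(string.Join(", ", TwoSeparateInvokes()));
        Console.WriteLine(string.Join(", ", OneCombinedInvoke()));
    }
}
```

The first line always lists Northwind's start and end before AdventureWorks starts. The order of events in the second line is up to the scheduler, so it can differ between runs.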

Now the different commands in each function run in parallel, as the changing console colors show. However, each set of database results still appeared as a single block. I suspected the calls were simply finishing too quickly to overlap, so I tested this hypothesis by putting a Thread.Sleep in the first database fetch.

What do you know, the database calls do interleave – if given enough time:
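The experiment can be approximated without a database. In this sketch, `WriteRows` is a hypothetical stand-in for looping over query results, and the per-row delay is my assumption about where a sleep would go; the exact placement used in the original test is not shown here.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading;
using System.Threading.Tasks;

public static class InterleaveSketch
{
    // Hypothetical stand-in for iterating query results; delayMs simulates slow rows.
    public static void WriteRows(string source, int rows, int delayMs, ConcurrentQueue<string> output)
    {
        for (int i = 0; i < rows; i++)
        {
            if (delayMs > 0) Thread.Sleep(delayMs);
            output.Enqueue(source + " row " + i);
        }
    }

    // Runs both loops in one Parallel.Invoke and returns the rows in arrival order.
    public static string[] Run(int delayMs)
    {
        var output = new ConcurrentQueue<string>();
        Parallel.Invoke(
            () => WriteRows("Northwind", 5, delayMs, output),
            () => WriteRows("AdventureWorks", 5, delayMs, output));
        return output.ToArray();
    }

    public static void Main()
    {
        foreach (string line in Run(20))
        {
            Console.WriteLine(line);
        }
    }
}
```

With a delay, rows from the two sources will usually alternate in the output, although the exact pattern is not guaranteed. Each source's own rows still arrive in their original order.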

I did notice that no matter what I did with the tasks, the order of the results from each LINQ query stayed the same (because of the OrderBy extension method). That is good news for programmers who want to add parallelism to an existing application and rely on the order from the database (perhaps for the presentation layer).
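The ordering behavior can be checked on an in-memory list. This is a minimal sketch rather than the code from this post: `SortInParallel` is a hypothetical helper, and the array of names stands in for dataContext.Customers.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class OrderedPlinqSketch
{
    // Sorts names with PLINQ; the OrderBy makes the enumerated result ordered
    // even though the work may be spread across several threads.
    public static List<string> SortInParallel(IEnumerable<string> companyNames)
    {
        return companyNames
            .AsParallel()
            .OrderBy(name => name, StringComparer.Ordinal)
            .ToList();
    }

    public static void Main()
    {
        // Hypothetical in-memory data in place of dataContext.Customers.
        var names = new[] { "Wolski", "Alfreds Futterkiste", "Bon app'", "Ernst Handel" };
        foreach (string name in SortInParallel(names))
        {
            Console.WriteLine(name);
        }
    }
}
```

Note that this holds when the results are consumed with foreach or ToList. PLINQ's ForAll method hands items to the delegate as they become available and makes no ordering promise.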

All in all, it was a very interesting exercise on a Saturday AM. I ordered

I wonder if there are new patterns that I will uncover after reading this.